Our highly versatile

synthetic data pipeline.

Generating useful training data for AI can be tedious and resource-heavy. Relevant data has to be acquired, preselected and labeled. Our synthetic data pipeline solves this issue by automated scenario generation and physical based sensor processing. All according to our customers use case and data structures.

DATAFACTORY

Our datafactory comprises a fully automated process to generate synthetic data. After parametrizing it to match our customers use case, it is able to generate large quantities of ultra realistic training data for autonomous systems.

ASSETS & SCENE DATA

In order to match the content of real world data in quality, we host an extensive library of high-quality models to populate our metaworlds:

Vehicles

- Buildings

Pedestrians

Traffic signs/lights

Street elements

Vegetation

- Railway

Industrial environments

Many more…

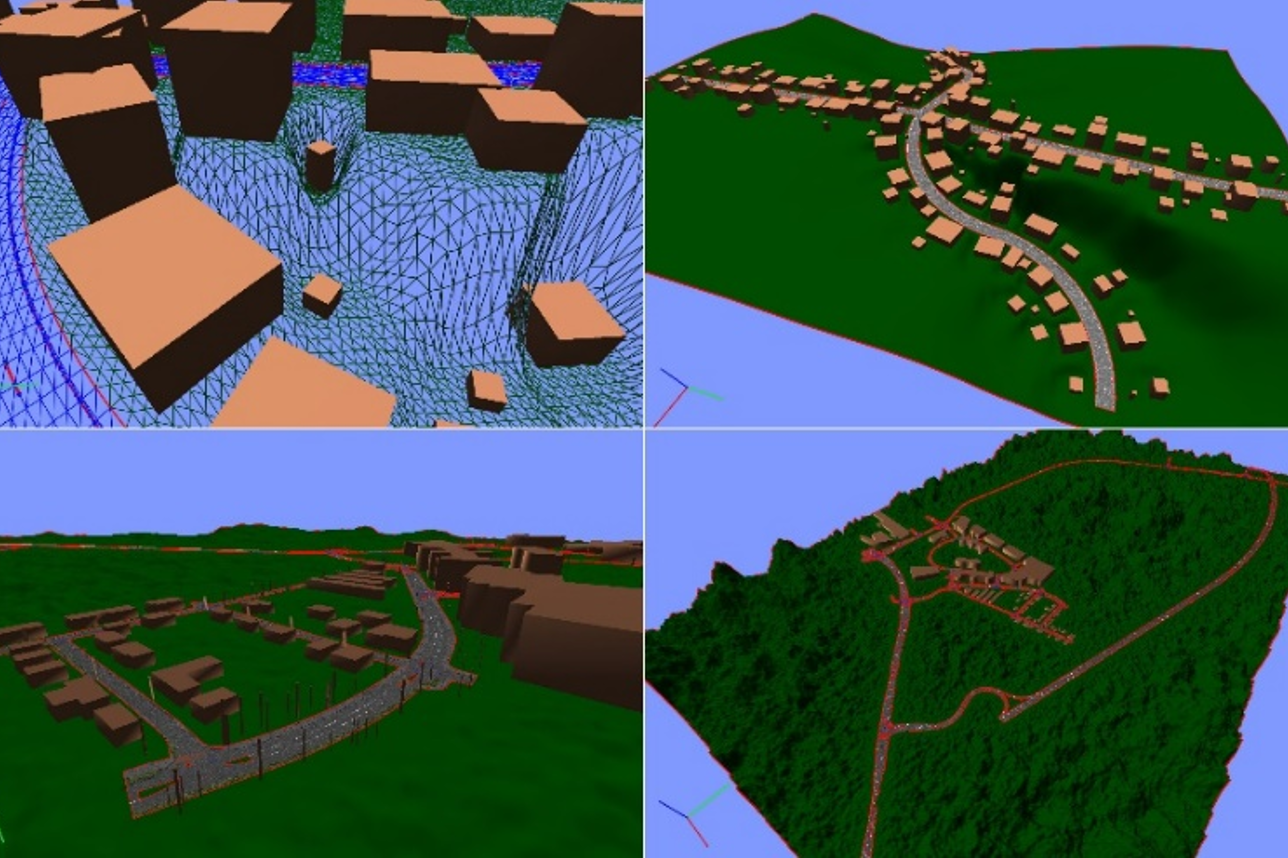

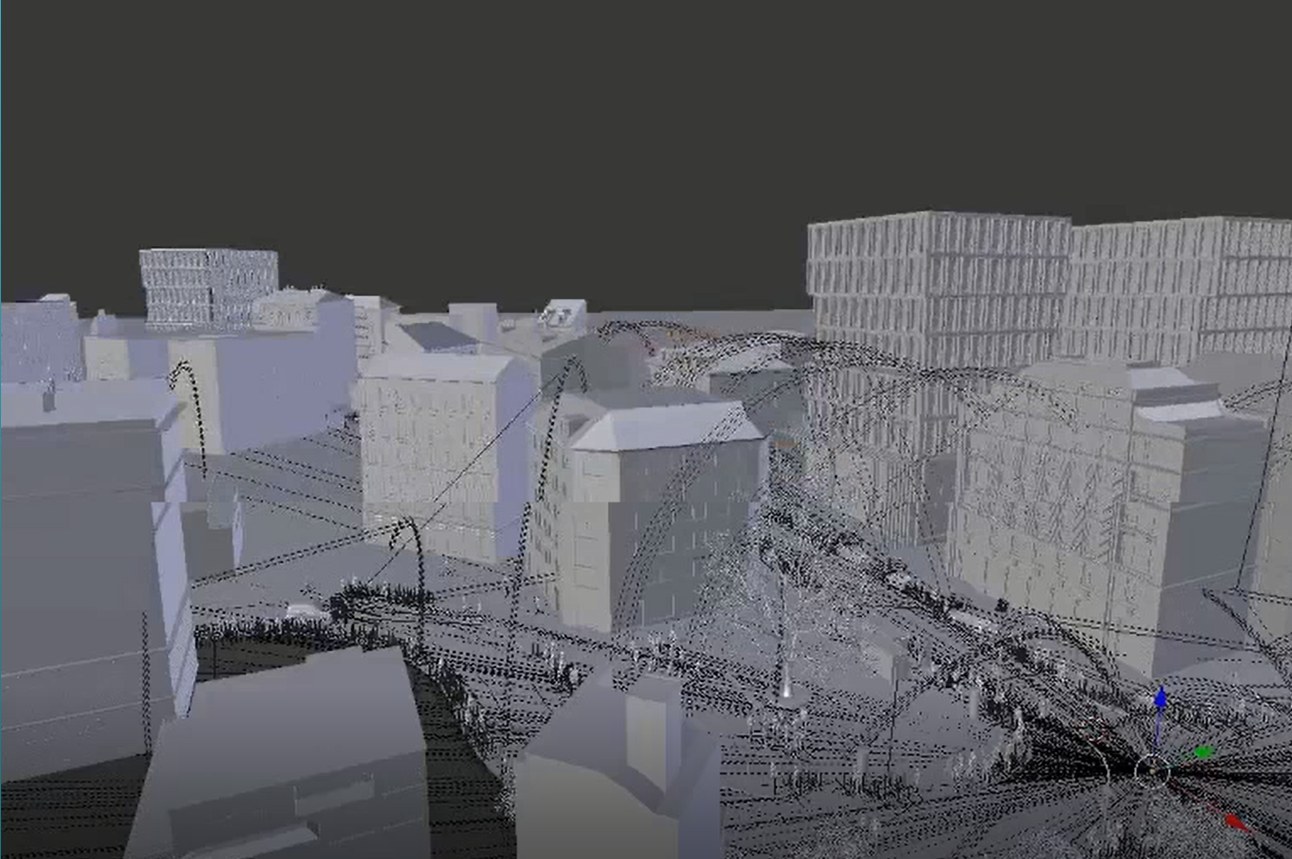

SCENE GENERATION

Our automated scene generation assembles the input data into defined scenarios. This step is shaped by a plethora of tools and parameters:

Procedural algorithms and specialized AI models empower ultra-realistic, diverse and variable environments

Parametrizable lighting, environment, profile of the terrain, density of objects, trajectories, animations and materials

Variations on consecutive image sequences or a frame by frame level

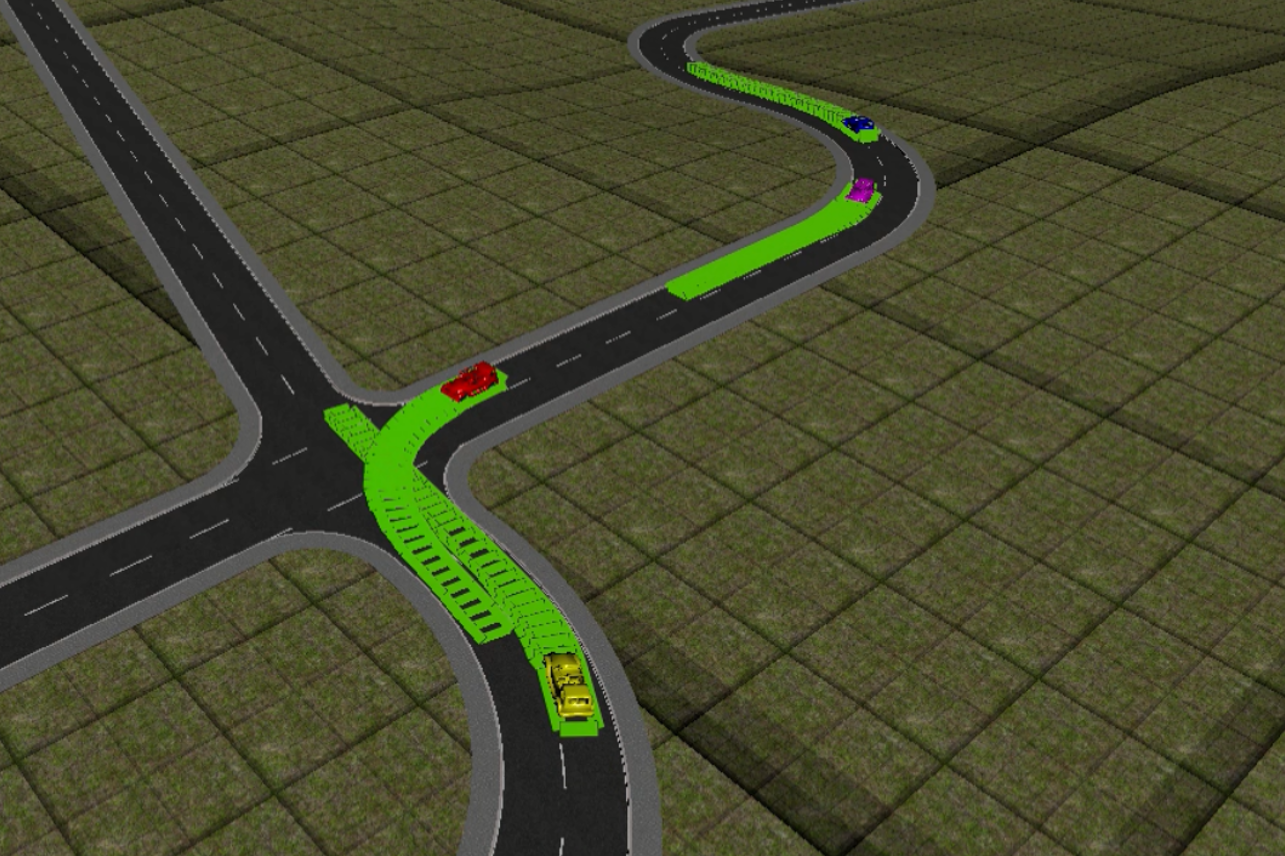

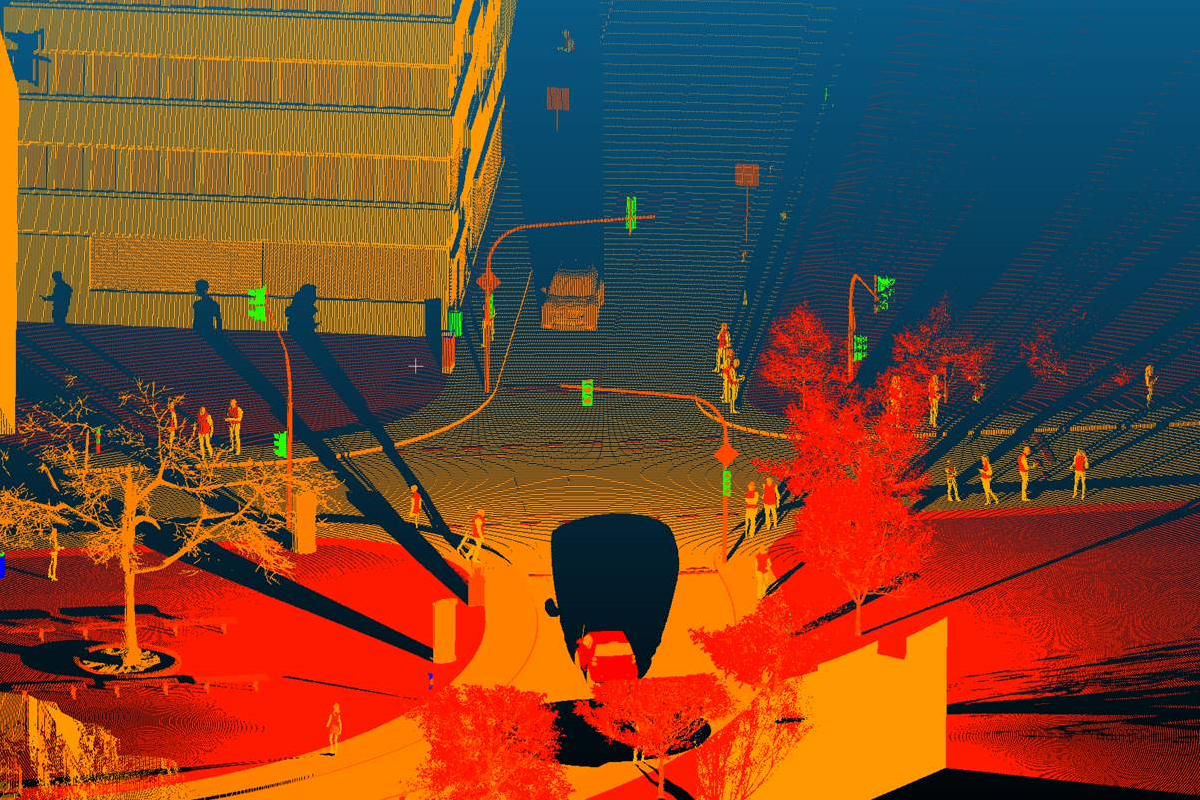

OUTPUT & METADATA

Ultimately, highly relevant synthetic training data are generated. They are easily integratable into our customer’s MLOps and have pixel-accurate labels, such as:

Depth

- Semantic group segmentation

Semantic instance segmentation

2D bounding boxes

3D bounding boxes

Body part annotation

- Skeleton/Pose .json

Environmental description

Behavioral description

Many more…